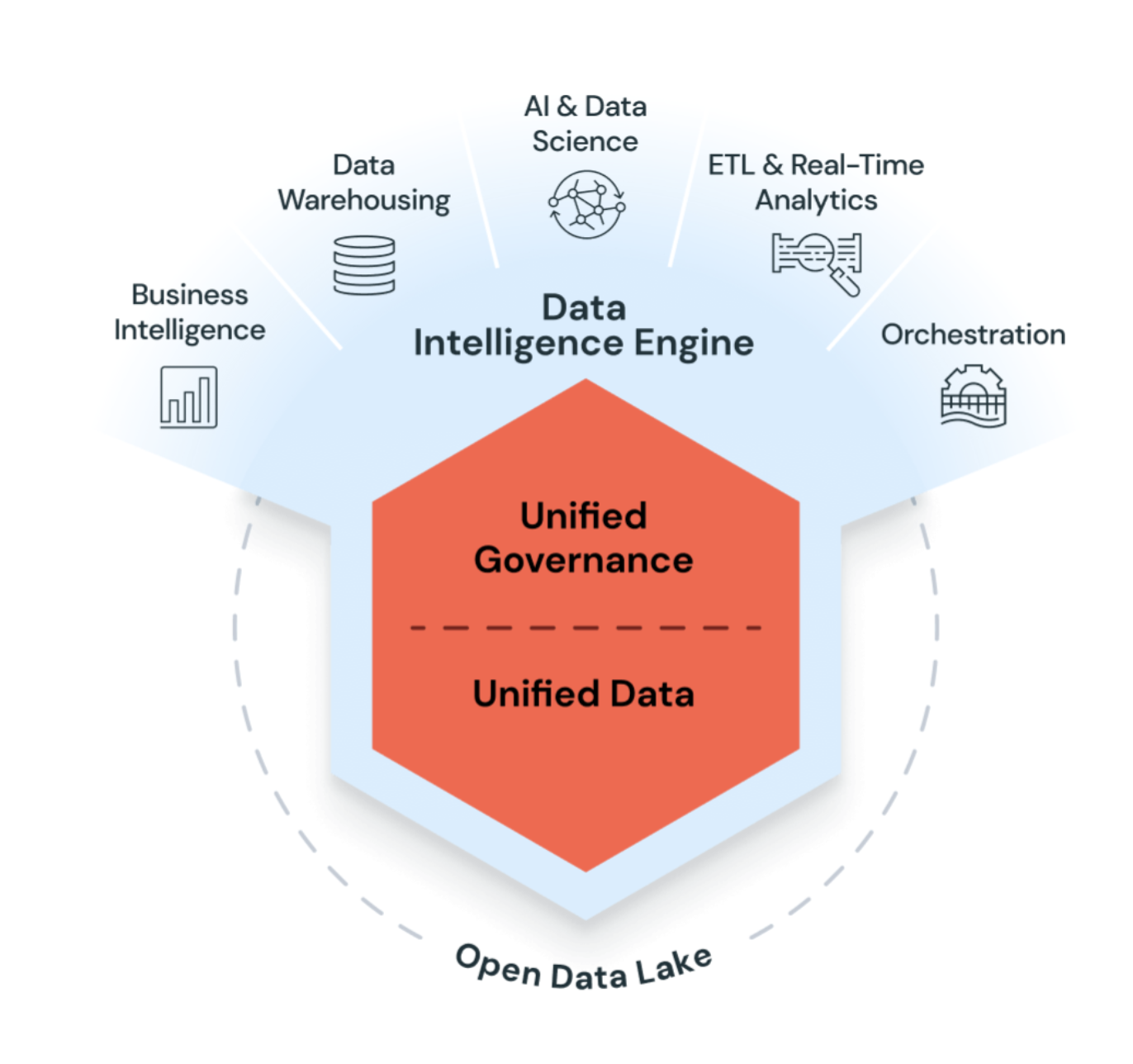

Lakehouse: warehouse power × data‑lake scale

The best of both worlds in one platform.

Unify analytics, AI, and streaming on a single, open platform. The Lakehouse is built on Delta Lake, an ACID-compliant storage layer that turns cloud object storage into a high-performance foundation for structured, semi-structured, and unstructured data. A single security and governance model spans every workload and cloud, eliminating data silos while reducing cost and complexity.